"There are three kinds of lies: lies, damned lies, and statistics." - Mark Twain

A new paper published in the journal "Climate Risk Management" claims a ridiculous 99.999% "certainty" that global warming over the past 25 years is man-made. The claim rests on climate models already falsified at confidence levels of 98% or more.

According to the authors,

"there is less than a one in one hundred thousand chance of observing an unbroken sequence of 304 months [25.3 years] (our analysis extends to June 2010) with mean surface temperature exceeding the 20th century average."

Fundamental problems with this claim [which is basically the falsified IPCC attribution claim of 95% certainty, on steroids] include:

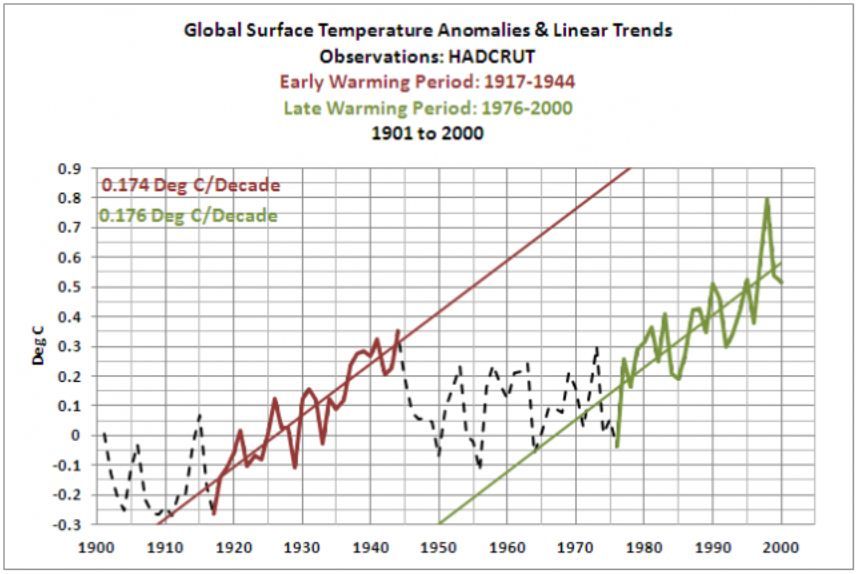

- There is no statistically significant difference between the rate of warming over the 27 years from 1917 to 1944 and the rate over the 25 years from 1975/1976 to 2000.

- "Climate models fail to simulate the [natural with 99.999% certainty] observed warming between 1910 and 1940. Not being able to address the attribution of change in the early 20th century to my mind precludes any highly confident attribution of change in the late 20th century." - Judith Curry

- "climate models are not fit for the purpose of detection and attribution of climate change on decadal to multidecadal timescales." - Judith Curry

- Statistically significant global warming of the surface stopped 19 years ago, and in the troposphere [which climate models say should warm faster than the surface] global warming stopped 16-26 years ago.

- Over 40 excuses for the 18-19-year "pause" in surface warming indicate that natural climate variability is far greater than climate models simulate and is capable of overwhelming any climate influence of CO2.

- Much of the warming of the past 25 years may be artificial, due to urban heat island effects and extensive upward adjustments of temperature records long after the fact.

- The paper uses climate models falsified at confidence levels exceeding 98%; thus the assumptions and conclusions derived from those models are invalid.

- The models also did not predict the 18-19-year "pause" in global warming, and thus are not valid for determining attribution to natural vs. anthropogenic causes.

- Climate models are also unable to simulate natural warming during prior interglacials, which were warmer than the present. This is another reason they cannot be used to rule out a natural cause for the past 25 years of warming.

- Additionally, climate models do not properly simulate solar amplification mechanisms, ocean oscillations, convection, clouds, atmospheric circulations, gravity waves, etc., and thus cannot be used to exclude these natural factors as potential causes of warming.

- The model used by the paper assumes that total solar irradiance alone adequately describes solar forcing of climate, ignoring large changes in the solar spectrum and solar amplification mechanisms. In addition, a simple integral of solar activity explains most of the known climate change over the past 400 years.

Thus, this new paper is not even wrong with 99.999% certainty.
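The first point in the list, that the 1917-1944 and 1975/1976-2000 warming rates are statistically indistinguishable, is the kind of claim anyone can check for themselves. Below is a minimal sketch, assuming you supply annual global temperature anomalies for each period from a dataset of your choice (no data is included here): fit an ordinary least-squares trend to each period and compare the two slopes with a simple z statistic. The function names `ols_slope` and `slope_difference_z` are hypothetical, and the test ignores autocorrelation, so treat it as a rough first check rather than the analysis behind the claim.

```python
import math


def ols_slope(x, y):
    """Return (slope, standard error of slope) for an ordinary least-squares fit."""
    n = len(x)
    if n < 3 or n != len(y):
        raise ValueError("need at least 3 paired (x, y) observations of equal length")
    mx = sum(x) / n
    my = sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    # Residual sum of squares around the fitted line, with n - 2 degrees of freedom
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    se = math.sqrt(rss / (n - 2) / sxx)
    return slope, se


def slope_difference_z(x1, y1, x2, y2):
    """z statistic for the difference between two independently fitted OLS slopes.

    Assumes uncorrelated residuals. Positive autocorrelation, common in temperature
    series, makes these naive standard errors too small, so correcting for it
    would shrink |z|.
    """
    b1, se1 = ols_slope(x1, y1)
    b2, se2 = ols_slope(x2, y2)
    return (b2 - b1) / math.sqrt(se1 ** 2 + se2 ** 2)
```

With years as `x` and annual anomalies as `y` for each period, a |z| below about 1.96 means the two rates cannot be distinguished at the 5% level under these simplifying assumptions. Because an autocorrelation correction only widens the standard errors, a naive result of "no significant difference" would not be overturned by that correction.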

Assumed climate model forcings for CO2, solar TSI, Southern Oscillation Index [SOI] and volcanic.

Upper right graph uses the same falsified IPCC technique of comparing climate model runs that assume no change in CO2 [black] with runs that assume increased CO2 [blue].

A probabilistic analysis of human influence on recent record global mean temperature changes

- DOI: 10.1016/j.crm.2014.03.002

Abstract

December 2013 was the 346th consecutive month where global land and ocean average surface temperature exceeded the 20th century monthly average, with February 1985 the last time mean temperature fell below this value. Even given these and other extraordinary statistics, public acceptance of human induced climate change and confidence in the supporting science has declined since 2007. The degree of uncertainty as to whether observed climate changes are due to human activity or are part of natural systems fluctuations remains a major stumbling block to effective adaptation action and risk management. Previous approaches to attribute change include qualitative expert-assessment approaches such as used in IPCC reports and use of ‘fingerprinting’ methods based on global climate models. Here we develop an alternative approach which provides a rigorous probabilistic statistical assessment of the link between observed climate changes and human activities in a way that can inform formal climate risk assessment. We construct and validate a time series model of anomalous global temperatures to June 2010, using rates of greenhouse gas (GHG) emissions, as well as other causal factors including solar radiation, volcanic forcing and the El Niño Southern Oscillation. When the effect of GHGs is removed, bootstrap simulation of the model reveals that there is less than a one in one hundred thousand chance of observing an unbroken sequence of 304 months (our analysis extends to June 2010) with mean surface temperature exceeding the 20th century average. We also show that one would expect a far greater number of short periods of falling global temperatures (as observed since 1998) if climate change was not occurring. This approach to assessing probabilities of human influence on global temperature could be transferred to other climate variables and extremes allowing enhanced formal risk assessment of climate change.
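The abstract's headline number comes from simulating temperatures with the greenhouse-gas term removed and counting how often an unbroken run of 304 above-average months turns up. The paper's actual model is not reproduced here. As a rough sketch of the general idea only, assuming a hypothetical AR(1) null model driven by resampled residuals (a residual bootstrap), the code below estimates the probability that the final run of months above a baseline-period mean is at least a given length. All names and parameters (`simulate_null`, `run_probability`, `phi`, `trend_per_month`) are illustrative assumptions, the series lengths merely mirror a 1901-2000 baseline followed by data to June 2010, and the demo uses synthetic noise, not real temperature data.

```python
import random
from statistics import fmean


def trailing_run_above(series, threshold):
    """Count consecutive values above threshold, ending at the last element."""
    run = 0
    for value in reversed(series):
        if value <= threshold:
            break
        run += 1
    return run


def simulate_null(residuals, n_months, phi, trend_per_month, rng):
    """One synthetic anomaly series: AR(1) noise driven by residuals drawn with
    replacement (a residual bootstrap), plus an optional linear background trend."""
    noise = 0.0  # starts at zero; no burn-in period, for simplicity
    series = []
    for t in range(n_months):
        noise = phi * noise + rng.choice(residuals)
        series.append(noise + trend_per_month * t)
    return series


def run_probability(residuals, n_months, baseline_months, run_length, phi,
                    trend_per_month=0.0, n_boot=1000, seed=1):
    """Fraction of simulated series whose final run above the mean of the first
    baseline_months values is at least run_length months long."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_boot):
        sim = simulate_null(residuals, n_months, phi, trend_per_month, rng)
        baseline = fmean(sim[:baseline_months])
        if trailing_run_above(sim, baseline) >= run_length:
            hits += 1
    return hits / n_boot


if __name__ == "__main__":
    demo_rng = random.Random(0)
    # Synthetic zero-mean residuals for illustration only; NOT real temperature data.
    raw = [demo_rng.gauss(0.0, 0.1) for _ in range(600)]
    raw_mean = fmean(raw)
    residuals = [r - raw_mean for r in raw]
    # 1314 months ~ Jan 1901 to Jun 2010, with a 1901-2000 (1200-month) baseline.
    for trend in (0.0, 0.0005):
        p = run_probability(residuals, n_months=1314, baseline_months=1200,
                            run_length=304, phi=0.6, trend_per_month=trend,
                            n_boot=200)
        print(f"trend_per_month={trend}: estimated P(final run >= 304) = {p:.3f}")
```

Setting `trend_per_month` to zero bakes in the assumption that there is no background warming at all; rerunning with a small positive value shows how strongly the estimated probability depends on that single assumption, which is the point raised in the first reader comment.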

This is another successful attempt to show that if you assume that there would have been no background warming then you can conclude that there would have been no background warming — and with great confidence and a low p-value.

You misunderstood the paper: it does not use climate models.

What part of "We construct and validate a time series model of anomalous global temperatures to June 2010, using rates of greenhouse gas (GHG) emissions, as well as other causal factors including solar radiation, volcanic forcing and the El Niño Southern Oscillation. When the effect of GHGs is removed, bootstrap simulation of the model reveals that there is less than a one in one hundred thousand chance of observing an unbroken sequence of 304 months (our analysis extends to June 2010) with mean surface temperature exceeding the 20th century average." do you not understand?

They do use a simple "bootstrap model".

Analysis: Climate scientists have not used proper procedures to determine if global warming is attributable to CO2

http://judithcurry.com/2014/10/23/root-cause-analysis-of-the-modern-warming